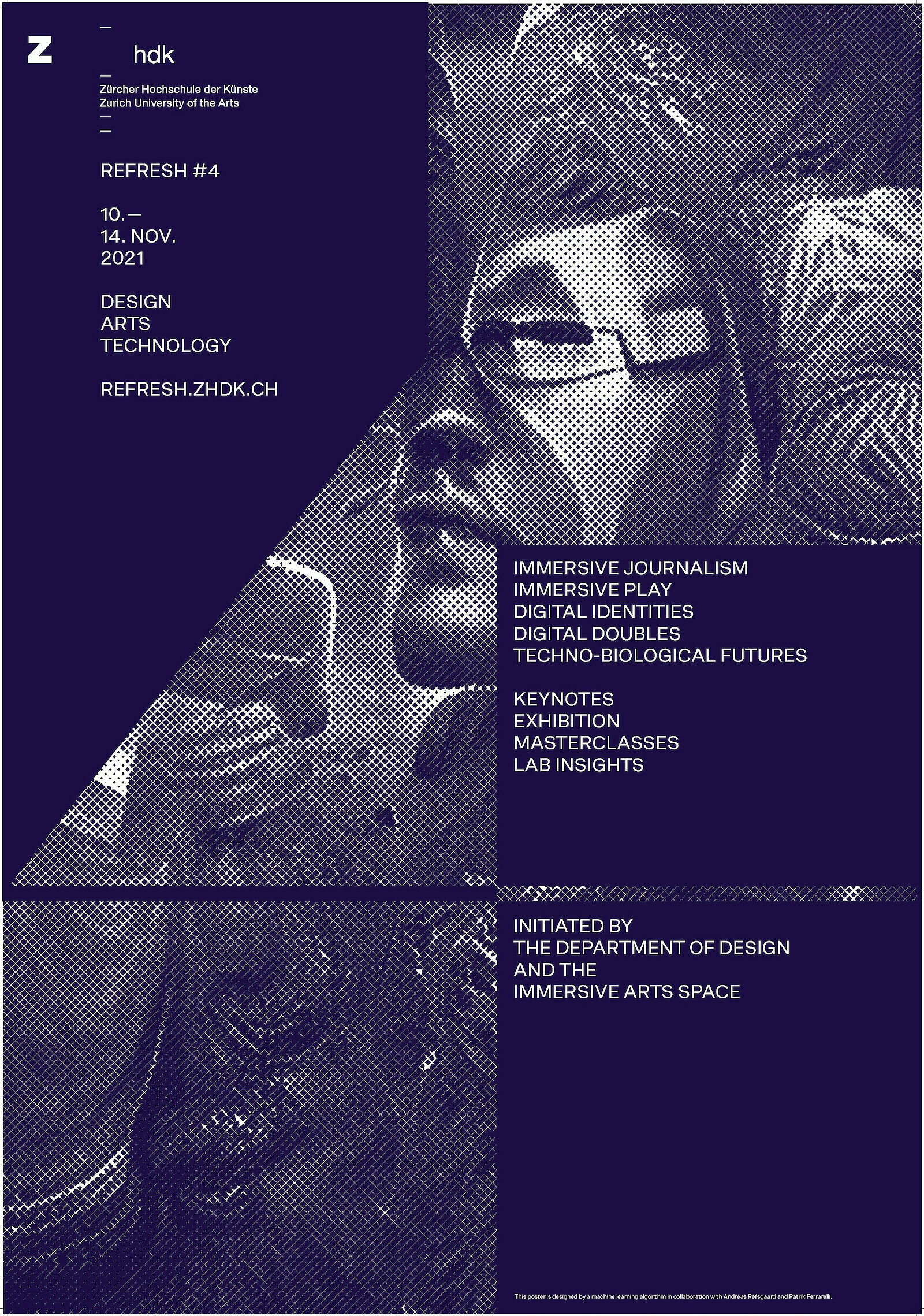

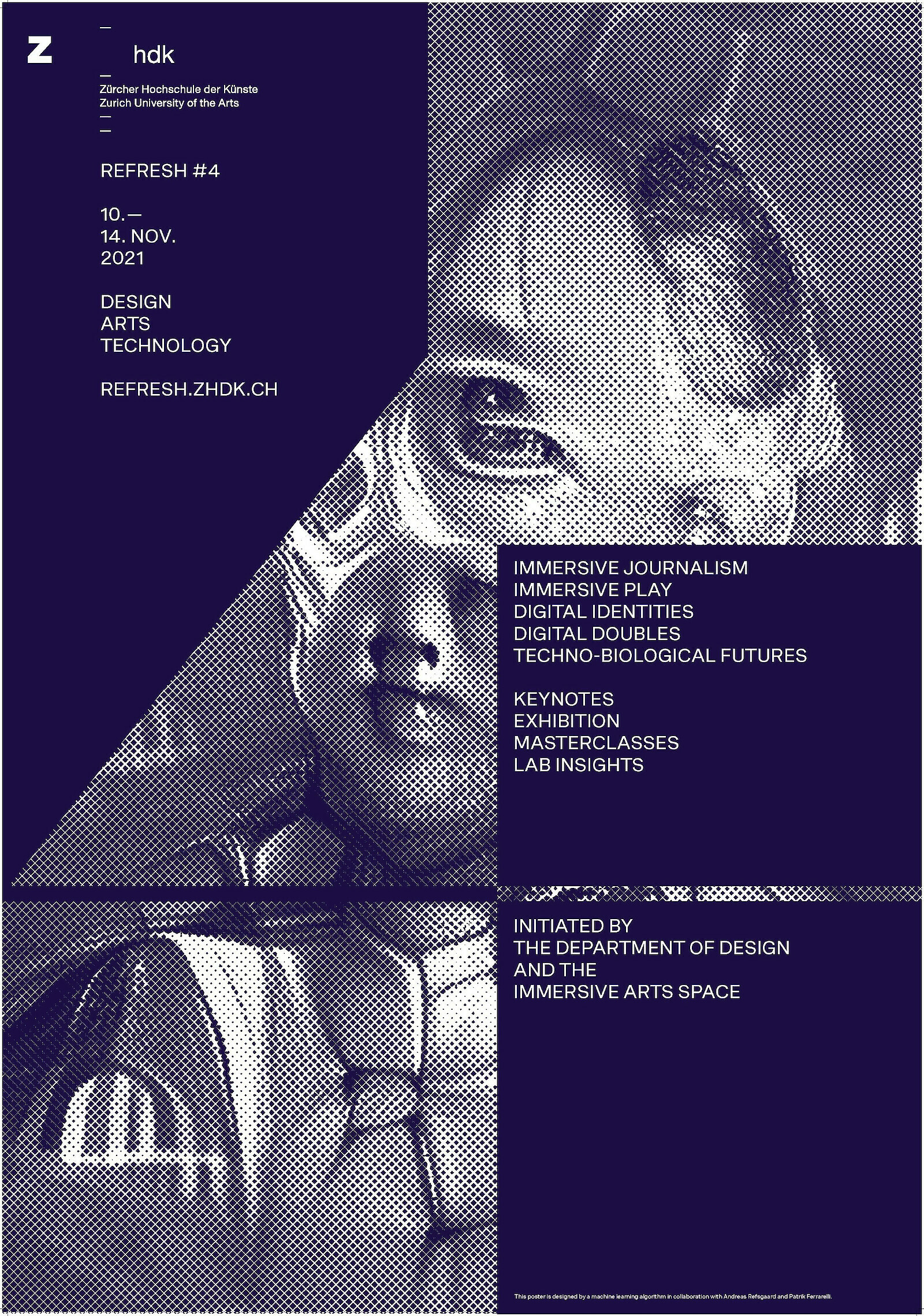

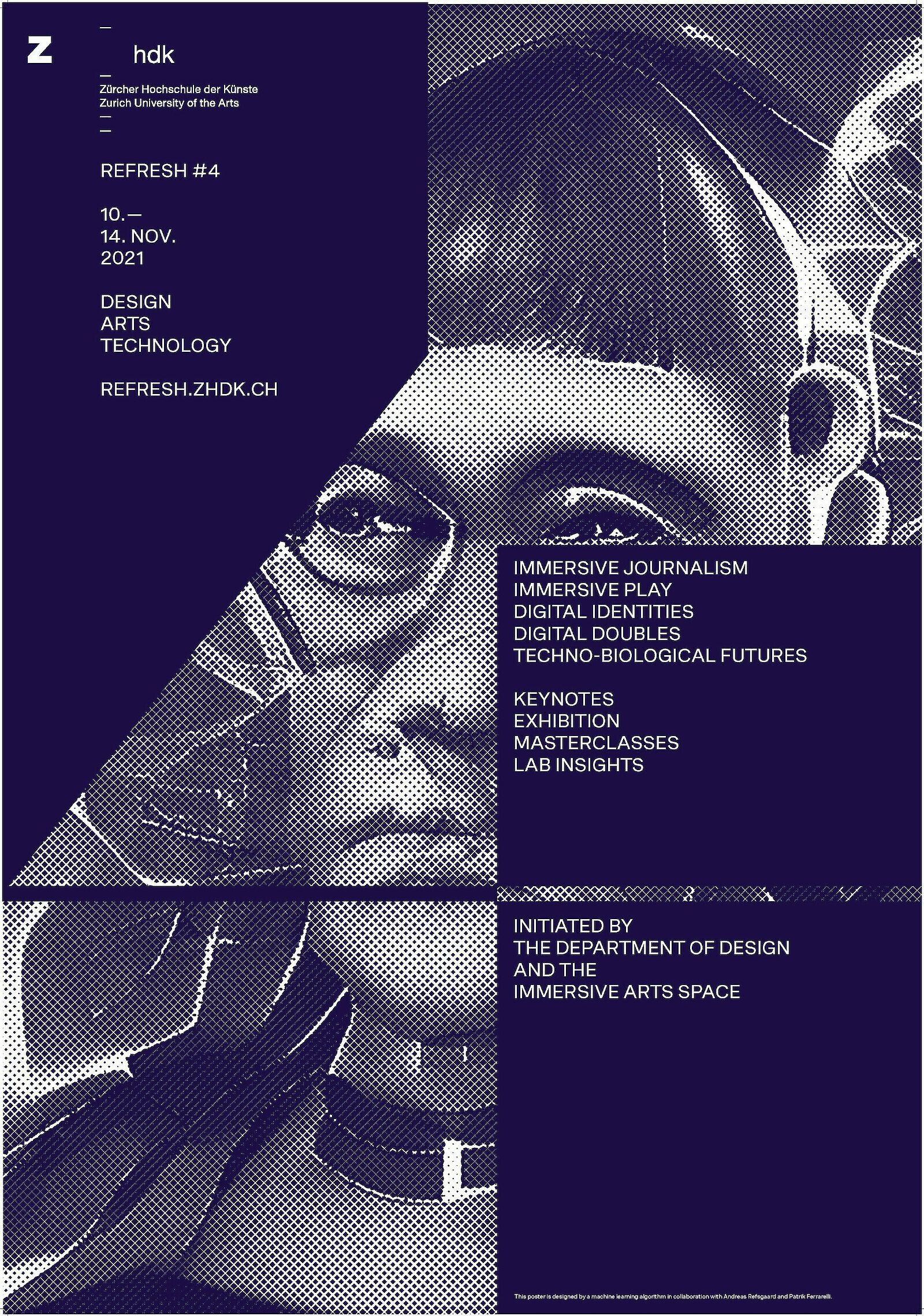

REFRESH #4 Poster Design

For this year's REFRESH digital identity, graphic designer Patrik Ferrarelli teamed up with interaction designer Andreas Refsgaard and a machine-learning algorithm. Using CLIP, a machine learning model trained by OpenAI to determine which text best fits a given image, in combination with generative machine learning models, Andreas generated a series of uncanny images around the REFRESH keywords. Patrik Ferrarelli then used these images as graphical elements in this year's posters and web visuals.

The text prompts came from this year's REFRESH festival keywords and included short snippets such as «Immersive journalism», «Immersive Play», «Digital identities» and «Techno-biological futures». From a technical perspective, Andreas used a combination of techniques initially developed by Ryan Murdoch and Katherine Crowson.

Simply put, CLIP guides a generator (like VQGAN, BigGAN or StyleGAN) to generate an image that corresponds to a given text:

«CLIP is a model that was originally intended for doing things like searching for the best match to a description like «a dog playing the violin» among a number of images. By pairing a network that can produce images (a «generator» of some sort) with CLIP, it is possible to tweak the generator’s input to try to match a description.» (Source: Ryan Murdoch on GitHub)
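
To make the idea of "tweaking the generator's input" a little more concrete, here is a minimal toy sketch in plain Python. It is not the code from the notebooks and uses neither CLIP nor a real generator: `toy_generator`, `toy_clip_score`, `guide_generator` and the four-number `prompt_embedding` are all hypothetical stand-ins invented for illustration, and the search is simple random hill climbing. It only shows the loop: generate an image from an input, score it against the text, keep the input if the score went up.

```python
import math
import random


def toy_generator(latent):
    """Hypothetical stand-in for an image generator such as VQGAN or BigGAN.

    Maps a latent vector to a tiny "image" (a list of floats in [-1, 1]).
    """
    return [math.tanh(z) for z in latent]


def toy_clip_score(image, text_embedding):
    """Hypothetical stand-in for CLIP: cosine similarity between the
    "image" and a vector playing the role of the encoded text prompt."""
    dot = sum(a * b for a, b in zip(image, text_embedding))
    norm_image = math.sqrt(sum(a * a for a in image))
    norm_text = math.sqrt(sum(b * b for b in text_embedding))
    if norm_image == 0.0 or norm_text == 0.0:
        return 0.0
    return dot / (norm_image * norm_text)


def guide_generator(text_embedding, steps=500, step_size=0.1, seed=0):
    """Nudge the generator's input so its output scores higher against the text.

    Assumption: random hill climbing is used here for simplicity; it is a
    swapped-in technique, not what the original notebooks do.
    """
    rng = random.Random(seed)
    latent = [rng.uniform(-1.0, 1.0) for _ in text_embedding]
    start_score = toy_clip_score(toy_generator(latent), text_embedding)
    best_score = start_score
    for _ in range(steps):
        # Propose a small random change to the generator's input.
        candidate = [z + rng.gauss(0.0, step_size) for z in latent]
        score = toy_clip_score(toy_generator(candidate), text_embedding)
        # Keep the change only if the "image" now matches the text better.
        if score > best_score:
            latent, best_score = candidate, score
    return latent, start_score, best_score


# Made-up vector standing in for an encoded prompt like «Digital identities».
prompt_embedding = [0.8, -0.5, 0.3, -0.9]
latent, start_score, final_score = guide_generator(prompt_embedding)
print(f"score before: {start_score:.3f}, after: {final_score:.3f}")
```

In the real notebooks the scoring is done by CLIP itself and the generator's input is typically adjusted by following gradients rather than by random trial and error, but the feedback loop between "generate" and "score" has the same shape.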

Although perhaps more uncanny than realistic, the outputs ended up suiting this year's REFRESH keywords.

Want to try it out for yourself?

Below are the Google Colab notebooks used for this project:

colab.research.google.com by Ryan Murdoch

colab.research.google.com by Katherine Crowson

colab.research.google.com by Bearsharktopusdev

Further reading:

Thoughts on DeepDaze, BigSleep, and Aleph2Image: here

VQGAN+CLIP — How does it work?: here